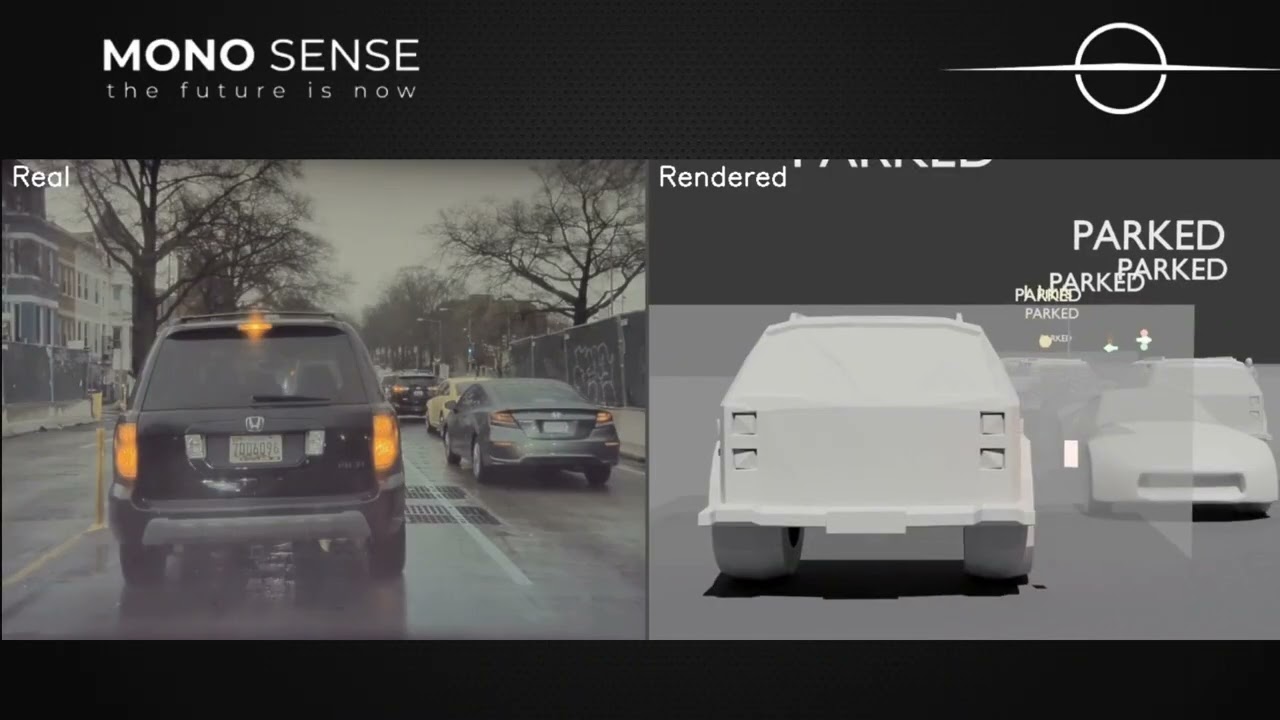

End-to-end autonomous vehicle scene understanding: monocular depth, multi-class detection, 3D tracking, velocity estimation, and collision prediction.

▶ Click to watch the full Product Pitch on YouTube

Code/

├── config.py # Camera calibration constants (front/left/right/back fisheye)

├── load_camera_params.py # Calibration loader utility

├── process_image.py # Single-image detection (YOLO + Depth Anything)

├── process_video.py # Single-video detection (YOLO + Depth Anything)

├── make_comparison.py # Side-by-side output comparison

├── run_pipeline.sh # Main pipeline orchestrator (Phases 3b–3h)

├── run_slurm.sh # SLURM job submission wrapper

│

├── Depth-Anything-V2/ # Depth estimation module

│ ├── run.py # Single-image depth inference

│ ├── run_video.py # Video-to-depth inference

│ ├── test_batch_depth.py # Batch depth testing

│ ├── app.py # Interactive Gradio app

│ ├── requirements.txt

│ └── depth_anything_v2/ # Model code (DPT + DINOv2 backbone)

│

├── Phase2and3/src/ # Phase 2 master detection pipeline

│ ├── detect_all.py # Master script: depth + pose + objects + lanes

│ ├── depth_estimator.py # Depth Anything V2 wrapper

│ ├── object_detector.py # YOLOWorld open-vocabulary detector

│ ├── pose_estimator.py # YOLOv8-pose pedestrian keypoint estimator

│ ├── road_marking_detector.py # HSV-based road arrow detector

│ ├── pick_best_frames.py # Frame quality selector

│ └── lane_detection/ # UFLD v2 (CULane ResNet-34) lane detector

│

├── fcos3d/ # FCOS3D monocular 3D object detection

│ └── fcos3d_test.py # FCOS3D inference (3D bbox, yaw, score)

│

├── fcos_smoothing/ # FCOS3D trajectory post-processing

│ ├── smooth_trajectories.py # EMA smoothing on 3D box sequences

│ └── script.sh

│

├── vehicle_tracking_fcos/ # Phase 3: velocity estimation & collision

│ ├── ego_motion_estimator.py # Ego-motion via KLT optical flow + depth

│ ├── absolute_velocity_estimator.py # Vehicle velocity (BoT-SORT + EMA)

│ ├── smooth_velocities.py # Temporal velocity smoothing (moving avg)

│ ├── pedestrian_velocity_estimator.py # Pedestrian absolute velocity (ego-relative)

│ ├── collision_detector.py # Time-to-collision prediction (5 s horizon)

│ └── visualize_velocity.py # Velocity vector + collision overlay on video

│

├── traffic_light_detection.py # Emissive LED traffic light classifier

└── traffic_sign_detection/ # LISA-dataset YOLO traffic sign detector

├── process_all_scenes.py # Batch scene processor

└── traffic_sign_video_inference.py # Per-video inference

Run via Phase2and3/src/detect_all.py. Produces one JSON file per frame.

| Step | Module | Method | Output fields |

|---|---|---|---|

| Depth estimation | depth_estimator.py |

Depth Anything V2 (vitl, 518 px input) |

depth_map (H×W float32, metres) |

| Vehicle/pedestrian detection | Pre-computed Phase 1 JSON | — | objects[] (class, bbox, position_3d) |

| Misc object detection | object_detector.py |

YOLOWorld (open-vocab) | objects[] + traffic cones, poles, signs |

| Pedestrian pose | pose_estimator.py |

YOLOv8-pose, 17 COCO keypoints | keypoints_3d[] per pedestrian |

| Lane detection | lane_detection/ |

UFLD v2, CULane ResNet-34, Bézier fit | lanes[] (points_3d, confidence) |

| Road markings | road_marking_detector.py |

White/yellow HSV + morphology | road_markings[] (type, bbox_2d, position_3d) |

| Traffic lights | traffic_light_detection.py |

HSV + CLAHE, 6× upscale | traffic_lights[] (state, confidence, position_3d) |

| Speed bumps | detect_all.py |

YOLOv5n TF SavedModel (640×640) | speed_bumps[] (bbox_2d, position_3d) |

| Vehicle sub-type | detect_all.py |

YOLOv8n-cls, ImageNet mapping | subtype per vehicle (sedan/suv/pickup) |

| Brake lights | detect_all.py |

Red HSV on lower half of vehicle crop | brake_light bool per vehicle |

Usage:

python Phase2and3/src/detect_all.py \

--video path/to/scene.mp4 \

--detections_dir Phase1/JSONFiles/scene1/ \

--out_dir output/scene1/ \

--step 1Orchestrated by run_pipeline.sh. Steps run sequentially per scene.

Video

└─► [3a] FCOS3D 3D Detection fcos3d/fcos3d_test.py

└─► [3b] Trajectory Smoothing fcos_smoothing/smooth_trajectories.py

└─► [3c] Ego-Motion vehicle_tracking_fcos/ego_motion_estimator.py

└─► [3d] Vehicle Velocity absolute_velocity_estimator.py

└─► [3e] Velocity Smoothing smooth_velocities.py

└─► [3f] Pedestrian Velocity pedestrian_velocity_estimator.py

└─► [3g] Collision Detection collision_detector.py

└─► [3h] Visualization visualize_velocity.py

3a — FCOS3D Inference (fcos3d/fcos3d_test.py)

python fcos3d/fcos3d_test.py \

--video scene.mp4 \

--config fcos3d_r101_caffe_fpn_gn-head_dcn_2x8_1x_nus-mono3d.py \

--checkpoint fcos3d_r101_caffe_fpn_gn-head_dcn_2x8_1x_nus-mono3d_finetune_20210717_095645-8d806dc2.pth \

--calib-mat <path/to/front.mat/derived/from/calibration.mat> \

--run-name scene1 --skip-frames 0Output: frame_XXXXXX.json per frame with objects[]{label, score, bbox_3d[x,y,z,w,h,l,yaw]}

3b — Trajectory Smoothing (fcos_smoothing/smooth_trajectories.py)

python fcos_smoothing/smooth_trajectories.py \

--input-dir fcos_output/scene1/ \

--output-dir fcos_output/scene1_smoothed/ \

--ema-alpha-pos 0.35 --ema-alpha-size 0.2 \

--distance-thresh 4.0 --max-missed 2 --interp-gap 2 --fps 10.0Tracking via 2D bbox IoU; EMA on position, size, and yaw; linear interpolation across gaps ≤ 2 frames.

3d — Vehicle Velocity (vehicle_tracking_fcos/absolute_velocity_estimator.py)

python vehicle_tracking_fcos/absolute_velocity_estimator.py \

--input-dir fcos_output/scene1_smoothed/ \

--output-dir velocity_output/scene1/Uses YOLOv8n + BoT-SORT for track IDs, ego-corrected Δposition/Δt per track.

Output fields per object: track_id, speed_m_s, velocity_vector [vx,vy,vz], position_3d.

3e — Velocity Smoothing (vehicle_tracking_fcos/smooth_velocities.py)

python vehicle_tracking_fcos/smooth_velocities.py \

--input-dir velocity_output/scene1/ \

--output-dir smoothed_velocities/scene1/ \

--window-size 30 --distance-thresh 5.03f — Pedestrian Velocity (vehicle_tracking_fcos/pedestrian_velocity_estimator.py)

python vehicle_tracking_fcos/pedestrian_velocity_estimator.py \

--ego-vel-dir smoothed_velocities/scene1/ \

--out-dir pedestrian_velocities/scene1/ \

--fps 36.0 --pos-window 10 --vel-window 10Detects pedestrians by label == 7. Absolute velocity = relative velocity − ego velocity.

3g — Collision Detection (vehicle_tracking_fcos/collision_detector.py)

python vehicle_tracking_fcos/collision_detector.py \

--input-dir pedestrian_velocities/scene1/ \

--output-dir collision_results/scene1/ \

--time-horizon 5.0 --radius 2.5 --parked-thresh 1.0Predicts closest approach via linear extrapolation over a 5-second horizon.

Objects with speed < 1.0 m/s are classified as parked and excluded.

Collision flag per object smoothed by majority vote across frames.

3h — Visualization (vehicle_tracking_fcos/visualize_velocity.py)

python vehicle_tracking_fcos/visualize_velocity.py \

--video scene.mp4 \

--velocity-dir collision_results/scene1/ \

--calib-mat <path/to/front.mat/derived/from/calibration.mat> \

--out-video annotated_scene1.mp4| Space | X | Y | Z |

|---|---|---|---|

| Camera (raw) | Right | Down | Forward |

| Phase 2 JSON output | Right | Up | −Forward |

| Depth range (valid) | — | — | 0.5 – 120 m |

| Package | Used by |

|---|---|

torch, torchvision |

Depth Anything V2, UFLD, FCOS3D |

ultralytics |

YOLOWorld, YOLOv8-pose, YOLOv8n-cls, YOLOv8n (BoT-SORT) |

mmdet3d |

FCOS3D inference |

opencv-python |

All video I/O, HSV detectors, KLT flow |

tensorflow |

Speed bump YOLOv5n SavedModel |

scipy, scikit-image |

Morphology, Bézier fitting |

transformers |

Depth Anything V2 metric model |

Install Phase 2/3 dependencies:

pip install torch torchvision ultralytics opencv-python scipy scikit-image transformers

# For FCOS3D: follow MMDetection3D install guide (use a separate virtual environment)Intrinsic parameters for all four cameras are defined in config.py as dicts with keys:

fx, fy, cx, cy, k1, k2, p1, p2 (distortion).

Used by load_camera_params.py to construct the 3×3 camera matrix K passed to all 3D projection steps.